We’re now a few days into April and our team has been working for Steinberg for five months (we started work on 5 November last year, which happened to be my birthday). Although I can’t share lots of details about what we’re working on, perhaps a few glimpses of what we’ve been up to will be of interest.

November

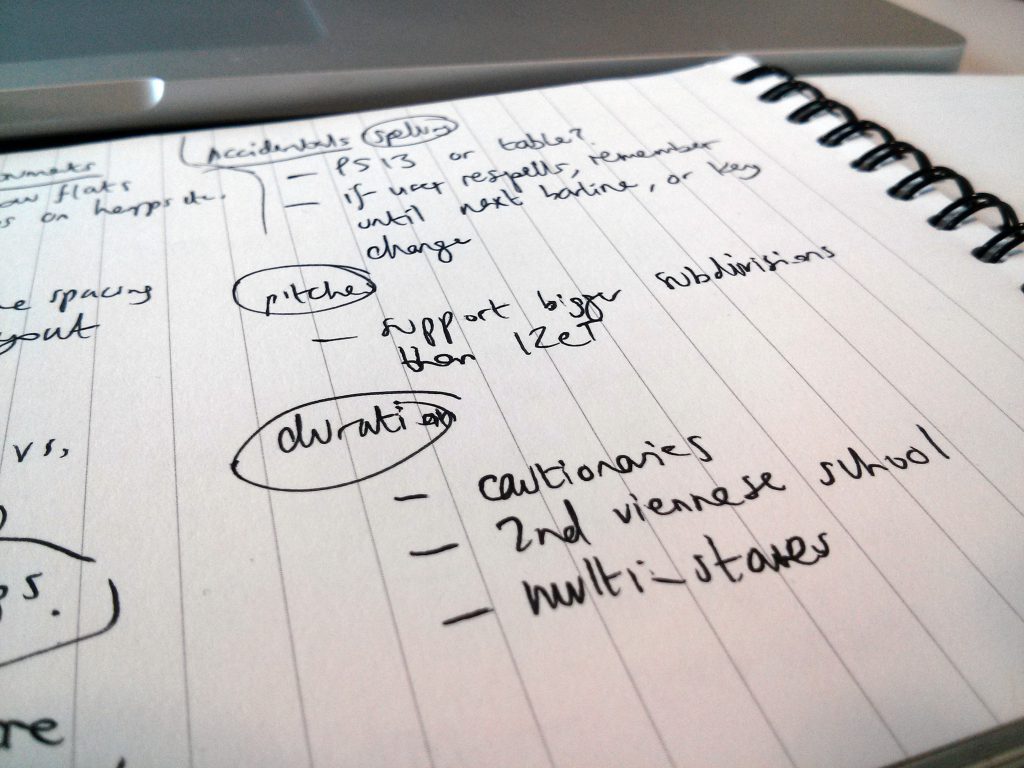

Apart from a visit to Hamburg in the middle of the month to get to know some of our new colleagues, we spent November sitting together in our temporary office with our notebooks on our laps, holding a series of discussions about the different functional areas of our future scoring program. These were essentially a big brain dump of the requirements we could remember from our days working on that other famous scoring program, together with our ideas for how we could improve each area.

Some really good ideas came out of those discussions, and indeed by the end of the month we had already settled on the basic conceptual model for how our new program would think about music, and how we could design the program in such a way that it would provide greater flexibility and freedom to composers and arrangers than other scoring programs.

Once all of us had filled our notebooks, we started transferring our notes to the internal wiki, fleshing them out and categorising them. By the end of the month, we had a pretty good high-level list of requirements, spanning more than 60 different functional areas. With all of these notes now safely transferred to our collective outboard brain, we were free to move on to the next challenge.

December

Having spent a whole month with the whole dirty dozen of us sitting together every day, we now started to divide and conquer: the testing team started generating test data (in MusicXML) based on the requirements we had discussed to date; the programmers started sketching architecture ideas on whiteboards; and my partner in crime Anthony (@anthughes) and I started discussing how we would start designing the user interaction and design of the program.

We also started going out to meet with music professionals and publishers. I was invited to attend a meeting of the Music Writers’ Committee at the Musicians’ Union, which led to our group discussion and brainstorming session a couple of months later, and Anthony, James (one of our wonderful programmers) and I went out to meet with the editorial and engraving staff at Peters Edition and Boosey & Hawkes.

Anthony and I also went up to Amersham to meet with Bev Wilson, a very experienced engraver who worked for nearly thirty years for Halstan, which until the 1990s was one of the busiest music engraving houses in the UK. Halstan’s approach – which they called The Halstan Process – was unusual in as much as it was based on photographic reproduction of over-sized positive originals, produced by brushing ink (from a water-soluble ink block) through metal stencils onto white paper. For large-scale orchestral or band scores, engravers would sometimes have to work with the top of the page flipped up, over their heads and behind them to avoid it trailing on the floor in front of their desks! You can see and hear Bev talking about The Halstan Process in this video, produced for the Open University:

We were very interested to talk with Bev because we wanted to examine music engraving from first principles, not assuming that we knew how music spacing should be done just because we had previously worked on another scoring program. These days, Bev still works freelance as an engraver, mostly on choral music for Oxford University Press, though of course now he brings his experience to bear on his work using Sibelius rather than ink and stencils.

January

In the New Year, I started drafting the design for our application’s default music font. In keeping with our general approach to look further back than the computer engraving of the past 25 years or so, and to examine what was done before even music typewriters and other such short-lived technologies challenged the way that music had been prepared for publication for the preceding 200 years, I canvassed some experienced musicians to determine which scores from particular publishers in particular eras they especially liked the look of. Some of the more experienced engravers remembered the dry transfer system Not-a-set, which was used for a couple of decades after traditional engraving was deemed to have got too expensive and before computer engraving was capable of producing acceptable results.

Not-a-set was based on a set of engraving punches used by Schott, in turn based on the punches used by Breitkopf & Härtel, the world’s oldest music publishing house. Not-a-set would serve as an excellent starting point for a new music font, because the symbols are printed on the dry transfer sheets unencumbered by staff lines, so you can really examine the shapes of the symbols very closely in order to draw them in a vector drawing or font program.

Getting hold of Not-a-set wasn’t easy, since it hasn’t been used by anybody in anger for more than 20 years, but thanks to the generosity of Bev Wilson in the UK and Peter Simcich in the US, I was able to scrape together enough examples to be able to produce digital versions of the majority of the basic symbols.

When compared with, say, Opus, the look is more substantial, and in general the music appears a little bolder and blacker on the page, which aids legibility when reading at a distance.

Anthony, James and I also continued visiting music publishers, including a meeting with Paul Tyas and Elaine Gould, author of the wonderful Behind Bars, at Faber Music, and I also had a couple of very productive meetings with professionals working in the field of musical theatre, to get a feel for the specific requirements of people working in that field.

February

As the winter months dragged on, we kept warm in our basement office by slaving over our hot keyboards. The testing team had by now generated dozens, if not hundreds, of test cases in MusicXML format, exporting them from other scoring programs and stripping out data that doesn’t serve our purpose via XSLT, in some cases having to hand-code specific details because the MusicXML exporters don’t handle them, or indeed the scoring program itself doesn’t handle them.
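
To give a flavour of what stripping unwanted data out of an exported file involves, here is a minimal sketch. It uses Python's standard `xml.etree.ElementTree` rather than XSLT, purely to keep the example self-contained, and the choice of what to drop (`print` elements and `default-x`/`default-y` attributes) is an assumption for illustration, not the testing team's actual rules. The sample is a fragment, not a complete MusicXML file.

```python
import xml.etree.ElementTree as ET

# A tiny MusicXML-style fragment; real exports carry far more layout detail.
SOURCE = """<score-partwise version="3.0">
  <part id="P1">
    <measure number="1">
      <print new-system="yes"/>
      <note default-x="83">
        <pitch><step>C</step><octave>4</octave></pitch>
        <duration>4</duration>
      </note>
    </measure>
  </part>
</score-partwise>"""


def strip_layout(root, drop_tags=("print",), drop_attrs=("default-x", "default-y")):
    """Remove layout-only elements and attributes in place.

    Which tags and attributes count as "layout-only" is a hypothetical choice.
    """
    # Snapshot the elements first so removing children cannot disturb the walk.
    for parent in list(root.iter()):
        for child in list(parent):
            if child.tag in drop_tags:
                parent.remove(child)
        for attr in drop_attrs:
            parent.attrib.pop(attr, None)
    return root


root = strip_layout(ET.fromstring(SOURCE))
print(ET.tostring(root, encoding="unicode"))
```

The musical content (pitch and duration) survives untouched, while the positioning hints that another program added are gone.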

Meanwhile, the programmers had started to build the lowest levels of the musical brain of our new scoring application, including fundamental things like deciding how pitch and duration of notes will be stored. One of the programmers spent a couple of days building a simple piano roll event view able to display pitch and duration of notes – but still no actual music notation display at this point. The simple piano roll display and the low-level engine were lashed together into a test harness application, which could import certain primitive types of data (notes, but not even rests at this point) into the low-level model. The very first steps had been taken!
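
As a rough illustration of what storing pitch and duration and showing them in a piano roll can look like, here is a minimal Python sketch. It is not the application's actual design: the `Note` class, the MIDI-style pitch numbers, the quarter-note time unit and the `piano_roll` function are all assumptions made for the example.

```python
import math
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Note:
    """Hypothetical note event: a pitch plus exact rhythmic values."""
    pitch: int           # assumed MIDI note number, 60 = middle C
    start: Fraction      # onset, measured in quarter notes
    duration: Fraction   # length, measured in quarter notes


def piano_roll(notes, step=Fraction(1, 4)):
    """Render notes as text rows, highest pitch first, one column per step."""
    if not notes:
        return []
    end = max(n.start + n.duration for n in notes)
    columns = math.ceil(end / step)
    rows = []
    for pitch in sorted({n.pitch for n in notes}, reverse=True):
        cells = []
        for i in range(columns):
            t = i * step
            sounding = any(
                n.pitch == pitch and n.start <= t < n.start + n.duration
                for n in notes
            )
            cells.append("#" if sounding else ".")
        rows.append(f"{pitch:3d} " + "".join(cells))
    return rows


notes = [
    Note(60, Fraction(0), Fraction(1)),         # C for a quarter note
    Note(64, Fraction(1), Fraction(1, 2)),      # E for an eighth note
    Note(67, Fraction(3, 2), Fraction(1, 2)),   # G for an eighth note
]
for row in piano_roll(notes):
    print(row)
```

Using exact fractions rather than floating-point numbers for time is one plausible choice here, since tuplets such as triplets cannot be represented exactly in binary floating point.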

I carried on working on music font design, and soon discovered that I needed to take a step back and consider how the font itself would be set up. Over the course of several weeks, I surveyed many existing music fonts, the Unicode range for musical symbols, and the standard texts on music notation to try to create a categorised list of all of the symbols used in Conventional Music Notation (Donald Byrd’s term). Although this work is far from complete, I have now built a list of around 800 unique symbols, divided between nearly 60 different categories. I will write more about this mapping and how I hope it can become a new standard for people who want to design music fonts in future.

Anthony and I also spent considerable time locked away in the broom cupboard-style meeting room in our temporary office discussing further user interaction principles. Being able to think freely about how every aspect of the application will work without worrying about having to fit features into a mature application with well-established idioms for interaction is incredibly liberating, and I am confident that we are coming up with better, simpler and more efficient workflows for inputting and editing notes and other musical objects in your score.

March

At the very end of February, we left our temporary home near Kings Cross and moved to our new permanent home a couple of miles to the east, a short walk from Old Street station. We now have plenty of room to spread out and, hopefully, to grow our team in the future.

The burgeoning test harness for our new program’s musical brain is now able to display real musical notes! It’s only a single line of notes, only the rhythms are displayed rather than the actual pitches, and the spacing is crude, but as a demonstration of the next steps in the musical brain’s understanding of music it’s an exciting moment: from the fundamental way in which pitch and duration are stored we can now see notes of the correct duration that can reformat and reflow themselves as they are edited. Baby steps, but important ones.

Another important step was the first few functions in our application’s scripting API. At the moment we’re using Lua, since it is highly portable, small, fast and efficient, well-suited to embedding, and already used with great success in a number of high-profile applications (including Adobe Lightroom) and game engines (the middleware used by developers to handle 3D graphics, physics, game logic etc. in games for consoles and PCs). The hope is that we will be able to deliver a fully-featured scripting API that will allow users of our application to create sophisticated scripts that can add valuable functionality to our application. Early investigations of using the Koneki IDE to write and debug scripts running in our test harness applications are promising, which means that script developers should have a very comfortable development environment for writing scripts for our application.

To help our testing team verify that the musical brain is doing the right kinds of things when performing simple editing operations, we’ve also started work on exporting MusicXML files from our test harness. Data can now be imported into our test harness via MusicXML, and then exported again, making it possible to see what is happening to the music in another scoring application.
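
The import-then-export round trip can be pictured with a small Python sketch. This is only an illustration under stated assumptions: `import_notes` and `export_notes` are hypothetical names, the "model" is just a list of (step, octave, duration) tuples, and the exported XML is a bare fragment rather than a complete MusicXML file. Like the test harness at this stage, it handles notes but not rests.

```python
import xml.etree.ElementTree as ET


def import_notes(xml_text):
    """Read (step, octave, duration) triples from <note> elements."""
    root = ET.fromstring(xml_text)
    return [
        (
            n.findtext("pitch/step"),
            int(n.findtext("pitch/octave")),
            int(n.findtext("duration")),
        )
        for n in root.iter("note")
    ]


def export_notes(notes):
    """Write the triples back out as a single-part, single-measure fragment."""
    root = ET.Element("score-partwise")
    part = ET.SubElement(root, "part", id="P1")
    measure = ET.SubElement(part, "measure", number="1")
    for step, octave, duration in notes:
        note = ET.SubElement(measure, "note")
        pitch = ET.SubElement(note, "pitch")
        ET.SubElement(pitch, "step").text = step
        ET.SubElement(pitch, "octave").text = str(octave)
        ET.SubElement(note, "duration").text = str(duration)
    return ET.tostring(root, encoding="unicode")


original = [("C", 4, 4), ("E", 4, 2), ("G", 4, 2)]
round_tripped = import_notes(export_notes(original))
print(round_tripped == original)
```

If the data that comes back differs from the data that went in, something in the model or in the import/export code has changed the music, which is exactly the kind of thing a round trip is meant to expose.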

By the end of March, I had completed first drafts of all 800 or so musical symbols that will be included in our new music font, and once our application is capable of displaying more than simply note durations, I will be able to iterate those designs and get more and more of the font looking great.

And the next five months…?

It’s too early to guess what the state of our burgeoning application will be in another five months. We are taking our time over the fundamental design decisions because we want to make sure we build the most forward-looking, flexible system possible. I will keep you posted!

Wow, this is gonna be amazing! 😀

Great news! I hope that note durations will be well coded, so we will be able to copy/remove notes in tuplets and copy lines or dynamics on them… (maybe the unfeature of Sibelius that annoys me the most)

It is very interesting to read how the early development is taking place. Thank you for sharing this. Something I have wondered if you are considering is building in some form of music reader that could be used on large tablets in performance. I had not felt that small iPad sized devices were really viable but in the last few weeks I have noticed the emergence of a new breed of large tablets that could be ideal.

I’m thinking of something like this https://www.asus.com/AllinOne_PCs/ASUS_Transformer_AiO_P1801/

There seem to be various pdf based systems at present but I notice that most require a 3rd party annotation app of some kind so that the user can mark up the music.

I had wondered if something could be built into notation software that would permit touchscreen annotation but maybe also permit the setup of permissions. I can imagine a conductor or librarian ‘allowing’ a performer to move a rehearsal number for instance. A system like this would have so many benefits including for example the merging of preferred bowings. An orchestral library might even save several versions of the same work with markups from different conductors and players.

I’m sure you may have considered the many benefits of such a system but for the first time large, wireless tablets with good battery life would seem to make professional usage a real possibility

@Walter: We would love to build a reader application for mobile devices, for sure. I don’t know if we’ll have something ready at the moment the first version of the scoring application itself is released, but we’ll see.

An unexpected pleasant surprise to see this blog. Full of info, transparency and good will. Thanks to you Daniel and Steinberg for the promise of something positive out of the ashes of Sibelius.

On to the rest of the articles…

I’m looking forward to this; the new Sibelius 7 support team don’t seem to be able to help me with a crash-on-startup bug.

Sorry to hear that, Axel. My old friend Sam Butler is still providing sterling support for Sibelius at Avid, so do try contacting him directly.

Thank you! I will contact him right away!

I can take this moment to make a wish since you’re online!

One thing that I mentioned to you a while back was a problem (in another “well-known application”…) with woodwind and brass effects (doit, fall…) being too loud in Sibelius. It was improved in a patch after my note on that but it didn’t go the whole way to solving my problem.

I know that a fall is traditionally supposed to be louder than other notes. What I want though is the ability to make a fall that does not change in dynamic or starts at the dynamic notated.

I know that this is a very small detail and it’ll take months before you start working on that but I just wanted to mention it!

Thank you!

Fascinating to read this — I’m a die-hard Sibelius lover through and through, but I’d hitch my wagon to this new train in a heartbeat. Thanks for sharing!!!

Very exciting, Daniel. Looking forward to the launch of this new application. Can’t wait to try it out!!

You had me with “API”

You have the best username I’ve ever come across. How appropriate… and what humour!

Take your time with this, Daniel – take your time. My impression is that you want to create a musical notation program that will be able to do everything from Ferneyhough, Johnston, and Delius all the way to Gregorian Chant. One will have to incorporate every musical-notation oddity imaginable. I want to be hypothetically-able to transpose Feldman’s String Quartet No. 2 up a half-step.

800 symbols, already? There must be literally thousands out there waiting to be discovered to add to your default font. Leave no stone unturned!

Music in a circle!

http://www.generatorx.no/20060703/bibliodyssey-more-musical-notation/

The Emperor says, ‘Too many notes!’

http://www.darkroastedblend.com/2007/02/we-dare-you-to-play-these-scores.html

Admittedly a joke piece…

More scores and examples

http://homepage1.nifty.com/iberia/score_gallery.htm

I’m sure you’ve looked at all of those pages. If you can create a program that can notate these bad-boys, your new technological terror should be able to tackle anything.

Thanks so much for everything you guys are doing. Sometimes miracles do happen. Here’s to hoping the guys wearing suits do not hold you back or make you and the team have to compromise.

Thank you for these very enjoyable notation examples!

Heck, I’d love to be able to notate *all* this stuff natively without resorting to weirdo workarounds and ‘fooling’ the product to do my bidding.

Very Exciting. Godspeed, and let us know if there’s anything to be done to help.

This is hugely exciting stuff. So you effectively get the chance to treat all your previous work as the “prototype to throw away” 😉

I am still a Sibelius user, but I’ll happily add my voice to the “switch-in-a-heartbeat” chorus. Although I love the product, I loved the community at least as much, and your enthusiasm and patience on the Yahoo Sibelius group was amazing.

All the best with this wonderful project.

All good news, Daniel. Wish I’d known you were looking for Notaset, got loads here and I don’t expect I’ll have much further use for it…

Good luck with the next five months! It is very exciting.

Concerning the flexibility, I’m sure it could help to be able to edit the music while playing back at the same time, and not having this distinction between an edit mode and a playback mode.

For example it would be great to be able to loop a section while editing it (like in Cubase).

Flexibility could be improved too in terms of tuplets, like copying a part of a tuplet and crossing a barline.

Stoked to hear the dedication and progress, I’ll be the first customer even though I’ve been a “Sibling” since 2001

Looking forward to your next article! Thanks you guys!

Terrific story. I have many pages of Not-a-set, left to me when a close friend, a composer, passed away. If they’d be any use to you, you’re welcome to them.

@Bob, I would love to have those sheets if you would be able to send them to me. Thank you!

Very excited to read you’ve been consulting with musical theatre pros. Good luck Daniel. Looking forward to the next installment of ‘Making notes’!

Daniel, I always look forward to reading your blog posts over a cup of coffee. Thanks for taking us behind the scenes!

As a longtime FINALE guru, I applaud what you are doing. I was never a SIBELIUS fan as its first iterations were too ACORN-ish and followed neither WINDOZE nor MAC procedures.

As a FINALE user from 2.6.3 onward, I am very aware of the underlying code that will reach out and bite one unawares at frustrating moments. I mention this as you design from the ground up. A code whose weaknesses can be back-corrected would be a wonderful way to proceed into the future.

Also, I am an outlier on the MusicXML list and monitor Michael Good and his cronies as they discuss the future of this very valuable tool.

On another note, I am absolutely convinced that SHARPEYE is the only music scanning program that is worth using. I see that Graham Jones is in on some other projects, but nothing yet holds a candle to SHARPEYE for useful music scanning.

Finally, as an editor of obscure music that was edited only by the ears of the performers, the ability to hear a score precisely and clearly is a very high priority. I expect your Steinberg friends to supply this;-)

Daniel – great update; that’s a pretty solid five months I’d say..!

One thing occurred to me when you started talking of ‘game engine’ developers; are you thinking to build-in/include a (maybe future) option to allow certainly the application’s GUI to make use of the huge (read underused..!) amounts of GPU power on board most systems these days..?

I’m reading of this ‘technique’ being used more and more in graphic or video edit type programs – yet to catch on in the DAW or scoring applications world…

Food for thought…

@Bob: Yes, there are APIs like OpenCL to run general computational tasks on GPUs and other similar processors. Our colleagues in Hamburg are very adept at wringing every last drop of power out of today’s hardware for playback in Cubase, and I daresay this is something they are looking at. We’re hoping to piggyback on their hard work for the playback features of our new application.

I found a couple of things interesting!

I’m most impressed with the transparency, and hope that there are regular installments to your progress.

I am fond of the idea that the program may someday find its way to serving as an electronic music stand on a tablet, though ancillary ideas like this might bog down less experienced designers, I’m not at all concerned in this case.

And, like several other long time “siblings” (love the term), I’ve been using that product from the beginning, but I’m most willing to move along with those that were the true heart of the product, rather than jump ship with the current owners.

Daniel,

I’m excited to see that you’re looking beyond simple 12TET. I hope you’re considering incorporating Ben Johnston’s notational systems for just intonation into the new program. What would be helpful is the ability to apply a hidden message to a note that allows it to be retuned in playback. Having this system work in cents would be ideal. You can approximate this in Sibelius now with MIDI messages, but it applies to an entire staff, and you must translate cents into the 0-127 MIDI system. It also lacks the precision of tuning in pure cents.

Great article, I look forward to seeing and hearing more in the future.

Jude

I find the idea of just intonation as an option most intriguing.

If it could be done easily (given the exigencies of sound libraries and so on) it could occasionally come in handy.

But it would come fairly well down in my wish-for list.

Fantastic update Daniel, and I look forward to future postings with great interest. Also really delighted that you are really getting to grips with the requirements of musical theatre. I am beta testing a series of new plugin instruments for an American company at the moment as well – (Pro Tools and Logic being my test platforms) under of course an NDA . . .

I’m very interested to read about the thought you’re obviously putting into font mapping. I very much look forward to seeing if the eventual implementation avoids the sprawl of fonts Sibelius has ended up with (I’ve lost count of how many “Opus” fonts there are now!), and integrates more tidily with the Unicode standard (e.g. by respecting currently standardised subsets).

@Thomas: I will post more about my work on designing a new font mapping soon, but there will certainly be just one font: these days, there’s no need for 18 different fonts (that’s how many are in the Opus family right now!), though the trade-off is that you lose the ability to type glyphs in text easily, because they all live at code points that don’t correspond to keys on your keyboard.

I like that, but there could be some glyphs one might desperately want to insert, using either Character Code or Alt + ‘0123’ entry protocol.

I sometimes use unusual glyphs from the expanded Cambria range used for text in IPA (oh, orright! International Phonetic Alphabet), and two projects this year or next would benefit from that sort of thing.

@Daniel: Ralph mentioned the use of the IPA glyphs. I once thought about a project, together with a well-known former German GMD (chief conductor of an opera house), to edit arias and songs that are required at auditions for opera singers, using these glyphs to give students the opportunity to study the right pronunciation from the very beginning.

My idea for your program: If you see any possibility to add dictionaries (including the possibility to hear all pronunciations) and IPA glyphs in the text font which will be supplied with your program – that could be very helpful in digital editions for the international singer and music student!

Of course, to be able to have choir sound libraries sing in the right syllables and pronunciations for the main languages, controlled by a notation program – that would be fantastic, yet probably the work of a hundred programming years…

I just grappled with the Sibelius 6 to 7 update: A whole bunch of pointless alterations to the layout and accessible boxes that the previous teams had developed from 1-6.

We are so so clever. Word 97 and Sibelius 6 die hard here; Not gonna buy yours unless it is soooo much better

‘Pointless’?

That would do for this response, rather than for Sib 7.

‘Previous teams’?

Same team.

Wow, fantastic to read about these developments. Being kept up to date in something we all care deeply about, in such a transparent manner, is something not often seen today. Thanks Daniel for your open and honest communication. I’ll be looking forward to the next 5 month post! (And all the others.)

I can’t imagine the depth of design that must be going into this. I’m a composer and have a family background in typography. This is going to be interesting. Congrats for such a stroke of luck as to be forced to explore new territories.

I hope you’re keeping its internal architectures open to the eternally expanding advances in musical thinking. I heard some great stuff the other month using interpolated time signatures. No telling what’s out there that no one’s been able to write down properly!

@Rick: Can you tell me more about what you mean by an interpolated time signature, or point me in the right direction?

I’ve just spent two hours digging through NPR’s audio archives looking for the article I was talking about. Sorry, no result.

Summing it up though, the software allows for moving from one time signature to another over time. Imagine the move from 5/4 to 4/4 being stretched over 30 seconds and the gesture’s shape controlled along a bezier curve or a fader.

It was an effective demonstration using a dance track and, despite the “Right-Brainyness” of it, physicality was the effect. During the program I imagined how hard it would be to conduct or to write it down and it reminded me of when I was trying to superimpose time signatures in old versions of Finale.

Which was actually my point (not just the example, but it’s a great idea). Finale’s underpinning architecture would not allow what was, at the time, a standard tool of modern compositional technique. If you wanted to have a violin in 3/4 and one in 5/4, Finale couldn’t handle it. So testing out your ideas in real time (orchestral concrete) wasn’t an option. I wasn’t familiar with Sibelius at the time, so I can’t comment about that in particular. Some composers’ solutions were to go back to paper (good, but not the point here), to use tape/disk (also good, but also not the point), or to drop the idea to fit their software’s limitations (bad response).

It’s just that now, you guys are making decisions that will affect the future boundaries of your project, and likely many composers’ as well. It’s an exciting time. I’m looking forward to seeing and hearing what comes of it.

Rick

I neither understand nor feel the need to understand all the Information Technicalities in Daniel’s post.

The musical stuff is a different story, and I like it a lot, particularly the commonsensical back-to-basics approach, and the idea of working at a comfortable cruising speed.

Another thing:

I’m more or less cheerfully working up a piano score of a 50′ piece for solo voices, chorus and chamber orchestra.

I greatly appreciate the relative facility with which Sibelius 7 manages this.

I use Reduction of 2 or 3 staves to one, and of 4 or 5 to two-handed keyboard.

Tuplets don’t work very well, of course, and the reduction is sometimes quixotic, with notes omitted or in the wrong octave.

I hope that in the new programme (for historical reasons I think of it as ‘Steinbach’), this important reduction option will be retained and its output improved.

I don’t care a damn for any of the automatic arrange options, in the same way that I don’t go much on taking to a caged canary with an automatic 12-gauge shotgun, and sincerely hope that they will be well down the feature development list.

Daniel – glad I was able to give you info via email a little while back about the high level things I thought would be interesting to incorporate from an architectural perspective.

But I can’t overemphasize the importance of an interface. This product should support artists who could be highly technical people or are not technical at all. If a single interface cannot accommodate all the customers, then two or more interfaces may be more appropriate – and I mean pre-designed canned interfaces (not a single customizable one as is often done).

I spent over 20 years with a very large oil company specifically tasked to look at software interfaces among other things, and my experience in that era led me to personally conclude that much of the software that succumbed to its competition or other market forces did so primarily due to a poorly designed interface, or one which did not adapt over time to the needs of its users and the market in general.

And let me also emphasize something crucial that to this day most software vendors fail to do. As far back as the late 80s and early 90s, one of the reasons for Microsoft’s success was its ‘usability lab’. You probably know as much about it as I do, so I will just state its importance: it is not merely a ‘beta test’ with users inside and outside of your company.

Joel

@Joel: We’re a long way from having a presentable user interface in our application itself, but as a first step Anthony and I are working on paper prototypes that we will test with people outside of the team to check that we’re going in the right direction. It’s certainly very important to us that we get the interface right.

*cough* once again: always available for testing *cough*

This. Exactly this. 🙂

@ Ralph Middenway

„Vorsprung durch Steinbach“

😉

Thanks for keeping us updated. I just can’t imagine the amount of work it is taking. But I believe you are the right team to do it.

Hi Daniel,

I always read these updates with great interest and I really look forward to the final product whenever it is finished.

I don’t know much about Not-a-set, but I believe the engraver in this video might be using it. (Where he is dry-transferring clefs and notes from a transparent sheet.) Might be useful or interesting to you.

Classical Music Publishing & Engraving 1984

http://www.youtube.com/watch?v=SvqZs6xv0DI

Please consult composers as well as engravers in your research, as they both have very different needs.

Finally, please read the following PDF if you haven’t already. It talks about the difference between the semantic-oriented model of notation and the graphics-oriented model, and how the greatest flexibility can be achieved by accommodating both models.

NOTEABILITY – A COMPREHENSIVE MUSIC NOTATION EDITOR

http://quod.lib.umich.edu/cgi/p/pod/dod-idx/noteability-a-comprehensive-music-notation-editor.pdf?c=icmc;idno=bbp2372.1998.306

(Keith Hamel might be an excellent person to consult with, actually.)

Thanks for listening!

@Jeff: We are certainly talking to potential users of all types, don’t worry: we will certainly not overlook the needs of composers. Thanks for sending a link to Keith Hamel’s paper; I’m familiar with Noteability Pro but hadn’t read this paper before. The approach Noteability takes with its structural and non-structural elements is itself not dissimilar to how e.g. symbols work in Sibelius – i.e. that you have an object that has a fixed rhythmic position and is offset (either horizontally or vertically, or both) from the staff – but with greater flexibility of the graphical appearance of those items. Noteability is certainly very flexible, and hopefully our application will marry a similar level of flexibility with a more refined graphical appearance.

I love this documentary even more: http://www.youtube.com/watch?v=m5uPPJj_M_o

What an amazing craftsmanship – is this really possible??!! You can see how poor humanity has become…

Yes, very excited by the sound of all this – long time fan of Sibelius – but I can’t wait to get my hands on this. I agree with a previous comment that tuplets could be better handled than in Sibelius but one thing that drives me crazy is the octave default – can I put in a plea to add some intelligence into which octave the next note defaults to? The Sibelius default of “the nearest note” is too simplistic and is wrong at least as often as it’s right.

@Ian: If you have any suggestions for the kinds of rules you would like our program to follow for determining the octave of the next note, do let us know.

Wow superfast response to the comment Daniel, thanks – I hope the program is that quick!

I think the “nearest octave” rule is a good starting point but I can think of three situations that should override it: (1) if this would take the note outside the range of the instrument (2) if there’s a clear pattern of notes which the program could notice: The simplest example would be a repeating pair of notes, say C-G-C-G – the “nearest octave” rule would get the C wrong every time. But perhaps the system could be smart enough to notice repeating motifs in the piece (eg up-a-third, down-a-sixth) (3) if the user corrects the same octave choice several times in a row (eg the system keeps picking C5 and the user keeps correcting it to C4).

And actually while we’re at it, similar arguments apply to note length defaults: if you’re in 4/4 time and you’ve just written a dotted quaver the next note is much, much more likely to be a semiquaver than another dotted quaver, etc.
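The note-length idea can be sketched in the same spirit. The Python below assumes durations are measured in crotchets (quarter notes), that the beat is one crotchet long, and that "the most likely next value" simply means "whatever completes the current beat"; the function name and the list of allowed note values are illustrative assumptions only.

```python
from fractions import Fraction

# Hypothetical set of single note values (in crotchets) worth suggesting.
SIMPLE_VALUES = {Fraction(1), Fraction(3, 4), Fraction(1, 2),
                 Fraction(3, 8), Fraction(1, 4), Fraction(1, 8)}


def suggest_next_duration(position, previous, beat=Fraction(1)):
    """Suggest a default duration for the next note.

    position -- where the next note starts, counted from the barline
    previous -- duration of the note just entered
    beat     -- length of one beat (a crotchet in 4/4)
    """
    remainder = position % beat
    if remainder == 0:
        # Already on a beat: repeating the previous value is a fair guess.
        return previous
    gap = beat - remainder
    # Only suggest the gap if a single simple note value fills it.
    return gap if gap in SIMPLE_VALUES else previous
```

After a dotted quaver entered on the beat, the next note starts at position 3/4, so the sketch suggests 1/4, a semiquaver.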

Thanks Daniel – really looking forward to the next update.

Might I suggest a somewhat less sophisticated solution? Two mere optional keyboard commands, placed comfortably near the area of keyboard note entry, would enable the user to override any default value with a compulsory upwards or downwards motion.

Daniel. Great you keep us posted on the development of hopefully a great notation programme. Can you tell us more on the integration with Cubase? What are the goals?

Good work Daniel, can’t wait to see the outcome. I’ve been a Sib user since 1997 but would also switch to your program straight away if it is as good as promised.

Can I just put in a plea that grace notes be a lot better than in Sib – that has always been a massive annoyance. Thanks!

In case anyone is interested, there are Lua development tool installers ready to go at this site:

http://www.eclipse.org/koneki/ldt/

So no need to install Eclipse first and then try to install the Lua plugin (which doesn’t currently work per the install instructions).

This is great!

Is there somewhere that you and the rest of the team are taking “requests” for features users would like to see in this new software? If not, will you be in the future?

Also, how much are you thinking about 20th/21st century notation techniques and trends?

One of the things that bothers me about all notation software is that there is no direct way to notate some things that are becoming more common. I understand though, from reading around the various internets, that a lot of this has to do with keeping each version user-friendly/backwards compatible with previous versions.

I’ve seen some speculation that this has become an opportunity to address this.

Thanks! I’m very excited to see this new software develop!

Mike

@Mike: I’d be interested to know which techniques and trends in particular you’re thinking of. Feel free to email me with more details.

I was teaching a course where students wanted to re-create other types of musical notation. While FINALE and SIBELIUS can do some of this, it seems to me that Adobe ILLUSTRATOR or another graphics program would better suffice.

Great to read about the progress Daniel … keep up the good work. I am sure it is going to be a wonderful program.

Thanks Daniel for sharing your work.

I’m deeply impressed with the (how to name it?) professional humility revealed by the words “we wanted to examine music engraving from first principles, not assuming that we knew how music spacing should be done just because we had previously worked on another scoring program”.

We all can expect a wonderful achievement from a team so determined to revise the first principles of their work!

Thank you

Probably one of, if not the biggest selling points for me with Sibelius is the Sibelius SoundSet project developed by Jonathan Loving, making integration between Sibelius and many of the leading sample libraries seamless. I believe Notion comes inbuilt with integration between important sample libraries such as EastWest. I think for Steinberg’s new product, this is now an absolutely essential requirement, without it, I really can’t see myself migrating to it however much I would love to. Have your team already given any thought to this?

You’d better ask me to come and make the coffee! It sounds as though you’re all too busy with both hands on many keyboards to pick up a “mug of”. Keep at it lads, and great to hear you’re in the locality where it all started in the first place, and not in some obscure location where the order of the day (and night) seems to be able to down vodka in a nanosecond.

Concerning fonts and notation: as a former Kapellmeister I loved the look of the “Neue Mozart Ausgabe” most of all editions I knew. As far as I know, it was partly written with Score, but I am not sure.

You mention a bolder and blacker appearance, which helps legibility. I always found that an appearance which is not so bold (like the NMA) helps rather more with legibility – even when I had to read scores at the conductor’s stand in the pit, where the light is rather dim.

Concerning your example with the treble clef: the resolution of the scanned image seems to be quite low, and the corner/angle in the upper left is – in my impression – rather unattractive. One probably should not “streamline” too exactly from scanned glyphs to computer-generated ones – in my opinion this is very noticeable in your treble clef example (I just tried to draw a treble clef in Illustrator, scanned from a major music edition…).

Do you know the book “Musiknotation. Von der Syntax des Notenstichs zum EDV-gesteuerten Notensatz” by Helene Wanske, printed by Schott? As far as I know, this is a doctoral thesis which was written by collecting a great deal of information from some very experienced music engravers at Schott who began to engrave music before computer engraving became possible. It is a very interesting book that imparts (right word?) deep insights. It is out of print, but I have found it here: http://www.zvab.com/basicSearch.do?anyWords=wanske+musiknotation&author=&title=&check_sn=on

I am very excited about your work and the upcoming notation software as well as this blog with its sharing of your working process and progress – thank you so much!

If I do phrase things a bit awkwardly at times or use the wrong words: please accept my apologies since I am German…

@Wolfram: Thanks for the recommendation of the Wanske book. I haven’t heard of this book before. We’ll see about getting hold of a copy.

This was a fantastic narrative of your first five months, Daniel– and an incredible birthday gift for me. We’re all cheering you on!

@Dave: Many happy returns!

Happy to see that things are starting to move forward for you, M. Daniel… but I do not get why Steinberg wants you to work on new notation software!!! They already have a good one in Cubase 7. Why in the world do they want to complicate everything? Will we have to convert, or get out of one program to get into another??????? Why don’t they put the effort into making things work better in Cubase 7?

@Laurier: The long-term plan is for the technology we are building for a new scoring program to find its way into Cubase, one way or another.

OK, so this is the place to give you ideas, or at least some thoughts, about what we would love to see or have in this software.

This is a very interesting read, I’m looking forward to seeing the final product!

Very excited. Notation software definitely needs to catch up to the times, and your work seems promising.

I guess my main problems with current software relate to its extended graphic abilities (or lack thereof). Some of the abilities I would like to see incorporated are:

– Easier creation and manipulation of symbols. Take for example the process of creating custom noteheads in Sibelius. One has to import a graphic via the “edit symbols” panel, then access that symbol through the “create new notehead” panel. If sizing needs tweaking you find yourself having to go back to the “edit symbol” panel and then maybe again to the “edit notehead” panel to re-adjust placement relative to the stem… phew…

Same goes for custom accidental shapes, which is indeed possible in Sibelius, but what a clumsy and unintuitive process it is…

– Intelligent definition of start and end point for glissandos, dotted lines etc. so they can be connected to specific notes, or noteheads and move with them if they are transposed. The only line that behaves this way in Sibelius (except for slur of course) is the keypad slide, but you can’t get any other line to stay connected to noteheads even if you move them around vertically.

– Cross staff split chords! This is one very basic ability that Finale has had for years, while in Sibelius if you select only some of the notes in a chord and press CMD-SHIFT-UP/DOWN the whole chord moves to the other staff. Overriding this is, again, a painstakingly unfriendly experience.

And this is probably my biggest wish, as one who uses many aspects of graphic scoring:

A “graphic mode” of sorts, in which one would still have access to all the standard musical graphic elements, but in which those elements could be placed anywhere on the page together with imported graphics which would be placed relative to other objects OR RELATIVE TO THE PAGE! For instance, I would love to be able to place a staff “somewhere” on the page, have the ability to place notes inside it and move them around in the usual way, but without having to deal with musico-structural constraints such as beat-count per measure, consistency in stave-count etc. This would of course be a non-playable mode. I guess I’m talking about a sort of combination vector-based-graphic-design/notation software ability. Today I use Illustrator to manipulate imported graphics from Sibelius, but then something like transposition requires either going back to Sibelius and re-exporting, probably having to deal with respacing issues, or moving everything around in Illustrator, which might be OK for a single note here and there but not for bigger musical tasks.

I know this is a very big request, but I would be very glad to even see steps in the general direction…

keep up the good work!

Very, very good idea! I should think this could be a playable mode: the system could keep separate track of normal-mode spacing and graphical-mode spacing.

I like your ‘biggest wish’ Yoni. How did you get Illustrator to import the score properly (I take it it was in svg format)? I had real trouble doing it successfully and Inkscape was far more trouble-free. This may not be the best place to carry on this conversation so please feel free to email me if you want.

I accidentally replied in a new thread… see below

Can’t seem to find your email address Peter…

So I’ll combine my answer to you with some other blog-related stuff!

I find that EPS graphic export works best with Illustrator. SVG always screws up line thickness etc.

And this is actually another area I would like to see improvements in. Although EPS generally imports flawlessly into Illustrator, the inner coherence of the graphics usually gets screwed up. Most text blocks become fragmented into several “micro-blocks”, and this happens even with single word text blocks like instrument names. So to move them about you either have to create a group from the disparate objects, or erase them all and create a new text object.

Imported graphics also become fragmented in this way once exported to Illustrator.

All in all, I think I can say that there is a largely untapped market of contemporary/experimental composers, whose needs from notation software, graphically speaking, far exceed any of the current software’s abilities.

In my opinion, the lack of progress in this area is strongly connected to the developers’ (and most mainstream users’) need to have everything connected to a sort of linear musical “core” in which every element is deterministically synchronised to every other element, so that everything could eventually be PERFORMED by a playback engine. This is a fine paradigm of thought for Mozart, Brahms, Schoenberg, Boulez, Reich, Lennon, Coltrane etc. But if you want “free-floating” elements, which are graphically and conceptually unsynchronisable to each other, or if you have a piece of music which reads completely differently on each rendition, you have to give up on playability. That’s why, as opposed to Jeff’s idea, I actually believe that such a graphic mode would gain greater versatility if it were to be disconnected, and thus freed, from the constraints of any playback engine.

I did the same Yoni, see my reply below. Thanks for taking the time to respond

As a Sibelius user I’m now bitten by the fact that the sib format is closed source and proprietary. MusicXML is not the solution if you have non-trivial scores.

What is your policy: will the new program be more friendly to its users?

@Wulfman: I’ve already written about this in response to earlier comments: our new application will have its own proprietary format, but it will support the import and export of MusicXML (among other formats, presumably), and the scripting support will also most likely allow translators to and from other file formats to be written by technically-minded end users.

Of course email addresses aren’t published here. Thanks for your words though. I found the same thing re line thickness using SVG with Sibelius 7->Illustrator. An integrated graphics environment in the notation app would be great, of course.

This is about Rehearsal Marks.

Managing their individual/collective position in instrumental parts can be time-consuming in Sibelius.

I hope that this task will be more user-friendly next time in what I think of as ‘Steinbach’.

On the playback side of things, I would love to have proper microtonal playback. Sibelius only plays microtones (and only quarter tones) after being processed by a plugin – and it doesn’t work if you have a combination of half-tones and quarter tones on the same staff because of the restrictions of MIDI.
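For readers wondering what the MIDI restriction looks like in practice: one common way to play a quarter tone over MIDI is to send a pitch-bend message before the note, and pitch bend applies to every note on a channel at once, so a bent and an unbent note cannot sound together on one channel. The Python sketch below only illustrates that arithmetic; it assumes the widespread default bend range of ±2 semitones, uses made-up function names, and does not claim to describe what the Sibelius plug-in actually does.

```python
def pitch_bend_value(cents, bend_range_semitones=2):
    """Convert an offset in cents to a 14-bit MIDI pitch-bend value.

    8192 means "no bend"; the assumed bend range is +/- 2 semitones.
    """
    max_cents = bend_range_semitones * 100
    if abs(cents) > max_cents:
        raise ValueError("offset is outside the assumed bend range")
    value = 8192 + round(cents / max_cents * 8192)
    return min(value, 16383)  # the top of the range is one step short


def pitch_bend_message(channel, value):
    """Build the three raw bytes of a pitch-bend message (channel 0-15)."""
    return bytes([0xE0 | channel, value & 0x7F, value >> 7])
```

A quarter tone sharp is 50 cents, which gives 10240 under these assumptions. Because the message carries only a channel number and no note number, a staff that mixes ordinary and quarter-tone pitches at the same moment needs its notes spread across more than one channel.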

The notation program “Mus2” is making headway in this field:

We are looking forward to a more elegant typeset, and better functionality!

I would really like some form of structural view, where you could see the sections and relating parts, and move parts and sections at will, like Renoise’s pattern matrix:

http://files.soniccdn.com/imagehosting/0c/pattern_matrix_12198_640.jpg

That’s a happy thought!

This is fascinating, Daniel! We follow you and your team inch by inch!! Thank you!!

I would like chord symbols to update automatically on all tracks. If I change some chord symbols on the guitar track, it automatically changes the chord symbols on the piano track. Also nice would be an option to override this behavior on single chord symbols… just in case I want to indicate a different voicing for different instruments.

I wonder if, in ‘Steinbach’, it could eventually be possible to select a number of notes in an ensemble score and simultaneously attach dynamic or technique text, or a line to them all.

It’s not as if the multiple-entry in Sibelius is at all difficult, but what with copying and repositioning in relatively homogeneous passages in complex scores it can be rather langweilig (i.e. it takes a fair while and can become rather tedious).

It excites me greatly to see talk of lua scripting. I remember (somewhat randomly) the World of Warcraft addon community absolutely buzzing with activity utilising it. I think probably the idea that is most interesting to me is being able to use addon support to adjust the interface itself and not just the way some program actions occur. I’d love to be able to customise the interface to something that fits me personally or even see people making ‘skins’ for the interface. That may be unrealistic, but I think that kind of flexibility would be an excellent selling point.

Yes! Lua scripting could allow for custom playback engines, custom spacings… as long as it is integrated tightly, and not just as an addon.

Daniel, good luck with this important project. I realize this may be far too early to consider, but I wonder if you can improve the ease of playback. I’m thinking about the time it takes to set up templates for orchestral playback with something like the Vienna Symphonic Library. There is always a compromise between the engraving function and the playback / composing function of such a programme (as in the case of Sibelius). I wonder how you see the balance going between these things in the new one?

Best wishes,

Rikky Rooksby

Where have you got to, guys?

Keep going!

In Hazzanut (Jewish cantorial music), music is typically notated in “free” time — that is, if you were using a pencil, you would write a bunch of notes reflecting the text that is being said, and then draw a bar line when the singer is to breathe or when the mood changes. At some point, the music might jump into 4/4 for a little while, and then back into free time. In Sibelius, it took a lot of plugins and work-arounds to be able to notate this kind of music. I hope that perhaps the new Spreadberg software will be able to do this more easily. Thanks!

@Yakov: “Spreadberg”… I don’t think that name will catch on! But we are certainly intending to make senza misura and free time music very easy to work with in our new application.

No news from you, M. Daniel.

I hope you will keep the things in Cubase like the expression map and dynamic map with Vienna Symphonic Orchestra. I hope you will make it as easy as it possibly can be. In Cubase, dynamic expressions are not so easy to understand; maybe I’m a little bit clumsy, but it is frustrating when we have to spend a few hours figuring out how it works…

Please keep in mind that it has to be as simple as if I wrote it by hand!!!!

Hi Daniel, I’ve just discovered this blog. It’s great to hear that work’s going on.

It’d be great if you considered to make the staff-size changeable for each page.

@Oliver: Don’t worry, variable staff size will definitely be a feature of our application.

What Oliver Laetsch said – and best of luck! I’ve been a happy Sibelius user since 2001, but I can hardly imagine it remaining at the same high level under current ownership.

I like it!

– great to read about the progress, Daniel!

Would it be possible to include the facility to represent parts with multiple repeats (x4 etc) still linked to the score but without amending it? I am thinking of bass or drums parts in a jazz band. I think it can be done with individual parts but you obviously lose the ability to mass-edit and it gets confusing looking after different part families.

Thanks.

@Jeff: This is a requirement we are aware of, certainly.

So looking forward to this! Two things I’ve thought of while using Sib 6 a lot recently that could be improved… when you add grace notes to more than one voice on the same stave it does crazy things with note spacing that just don’t line up. You can change them manually to something that looks reasonable, then you go to a part and it’s messed up again.

Also, could the beam over rests function be linked with time signature like beam groupings are in Sib? Sib 6 has the overall option ‘beam over rests=on/off’ with no option to change mid score.

Regardless, please keep up the great work and keep us posted!

Cheers!

Daniel – As an early adopter of Sibelius (v 1.0), I waited a long time for it to incorporate elements that would be relevant for scoring jazz. Very early on, I met here in NYC with the Finn brothers, and made a suggestion that we be able to apply different colors to different symbols individually. It took *years* to get into the product. Other jazz-related things were slowly incorporated.

One thing that always seemed like a hack to me (sorry if it was your code!) was the way the duration for a chord symbol is represented – I suppose you might call it “slash notation”. They are essentially silent notes, but had the buggy feature of transposing. There were always problems with this approach, causing jazz composers to have to continually highlight, convert to pitched note head, move to the correct placement on the staff, and finally to re-convert to slash notation. An absolute time killer, which is of course the opposite of what computer-based notation is supposed to be.

I mention this to you in the hope that this will be considered from the ground up in your new software, and not be an “add-on” such as it felt in Sibelius.

Thanks for your time —